mirror of

https://gitgud.io/reanon/nonono.git

synced 2026-05-10 23:40:12 -07:00

lmao (glm+qwen newest addition)

This commit is contained in:

@@ -0,0 +1,34 @@

|

||||

*.7z filter=lfs diff=lfs merge=lfs -text

|

||||

*.arrow filter=lfs diff=lfs merge=lfs -text

|

||||

*.bin filter=lfs diff=lfs merge=lfs -text

|

||||

*.bz2 filter=lfs diff=lfs merge=lfs -text

|

||||

*.ckpt filter=lfs diff=lfs merge=lfs -text

|

||||

*.ftz filter=lfs diff=lfs merge=lfs -text

|

||||

*.gz filter=lfs diff=lfs merge=lfs -text

|

||||

*.h5 filter=lfs diff=lfs merge=lfs -text

|

||||

*.joblib filter=lfs diff=lfs merge=lfs -text

|

||||

*.lfs.* filter=lfs diff=lfs merge=lfs -text

|

||||

*.mlmodel filter=lfs diff=lfs merge=lfs -text

|

||||

*.model filter=lfs diff=lfs merge=lfs -text

|

||||

*.msgpack filter=lfs diff=lfs merge=lfs -text

|

||||

*.npy filter=lfs diff=lfs merge=lfs -text

|

||||

*.npz filter=lfs diff=lfs merge=lfs -text

|

||||

*.onnx filter=lfs diff=lfs merge=lfs -text

|

||||

*.ot filter=lfs diff=lfs merge=lfs -text

|

||||

*.parquet filter=lfs diff=lfs merge=lfs -text

|

||||

*.pb filter=lfs diff=lfs merge=lfs -text

|

||||

*.pickle filter=lfs diff=lfs merge=lfs -text

|

||||

*.pkl filter=lfs diff=lfs merge=lfs -text

|

||||

*.pt filter=lfs diff=lfs merge=lfs -text

|

||||

*.pth filter=lfs diff=lfs merge=lfs -text

|

||||

*.rar filter=lfs diff=lfs merge=lfs -text

|

||||

*.safetensors filter=lfs diff=lfs merge=lfs -text

|

||||

saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

||||

*.tar.* filter=lfs diff=lfs merge=lfs -text

|

||||

*.tflite filter=lfs diff=lfs merge=lfs -text

|

||||

*.tgz filter=lfs diff=lfs merge=lfs -text

|

||||

*.wasm filter=lfs diff=lfs merge=lfs -text

|

||||

*.xz filter=lfs diff=lfs merge=lfs -text

|

||||

*.zip filter=lfs diff=lfs merge=lfs -text

|

||||

*.zst filter=lfs diff=lfs merge=lfs -text

|

||||

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

||||

+11

@@ -0,0 +1,11 @@

|

||||

.aider*

|

||||

.env*

|

||||

!.env.vault

|

||||

.venv

|

||||

.vscode

|

||||

.idea

|

||||

build

|

||||

greeting.md

|

||||

node_modules

|

||||

.windsurfrules

|

||||

http-client.private.env.json

|

||||

@@ -0,0 +1,4 @@

|

||||

#!/usr/bin/env sh

|

||||

. "$(dirname -- "$0")/_/husky.sh"

|

||||

|

||||

npm run type-check

|

||||

+13

@@ -0,0 +1,13 @@

|

||||

{

|

||||

"plugins": ["prettier-plugin-ejs"],

|

||||

"overrides": [

|

||||

{

|

||||

"files": "*.ejs",

|

||||

"options": {

|

||||

"printWidth": 120,

|

||||

"bracketSameLine": true

|

||||

}

|

||||

}

|

||||

],

|

||||

"trailingComma": "es5"

|

||||

}

|

||||

@@ -0,0 +1,72 @@

|

||||

# OAI Reverse Proxy - just a shitty fork

|

||||

Reverse proxy server for various LLM APIs.

|

||||

|

||||

### Table of Contents

|

||||

<!-- TOC -->

|

||||

* [OAI Reverse Proxy](#oai-reverse-proxy)

|

||||

* [Table of Contents](#table-of-contents)

|

||||

* [What is this?](#what-is-this)

|

||||

* [Features](#features)

|

||||

* [Usage Instructions](#usage-instructions)

|

||||

* [Personal Use (single-user)](#personal-use-single-user)

|

||||

* [Updating](#updating)

|

||||

* [Local Development](#local-development)

|

||||

* [Self-hosting](#self-hosting)

|

||||

* [Building](#building)

|

||||

* [Forking](#forking)

|

||||

<!-- TOC -->

|

||||

|

||||

## What is this?

|

||||

This project allows you to run a reverse proxy server for various LLM APIs.

|

||||

|

||||

## Features

|

||||

- [x] Support for multiple APIs

|

||||

- [x] [OpenAI](https://openai.com/)

|

||||

- [x] [Anthropic](https://www.anthropic.com/)

|

||||

- [x] [AWS Bedrock](https://aws.amazon.com/bedrock/) (Claude4 is fucked, dont care)

|

||||

- [x] [Vertex AI (GCP)](https://cloud.google.com/vertex-ai/)

|

||||

- [x] [Google MakerSuite/Gemini API](https://ai.google.dev/)

|

||||

- [x] [Azure OpenAI](https://azure.microsoft.com/en-us/products/ai-services/openai-service)

|

||||

- [x] Translation from OpenAI-formatted prompts to any other API, including streaming responses

|

||||

- [x] Multiple API keys with rotation and rate limit handling

|

||||

- [x] Basic user management

|

||||

- [x] Simple role-based permissions

|

||||

- [x] Per-model token quotas

|

||||

- [x] Temporary user accounts

|

||||

- [x] Event audit logging

|

||||

- [x] Optional full logging of prompts and completions

|

||||

- [x] Abuse detection and prevention

|

||||

- [x] IP address and user token model invocation rate limits

|

||||

- [x] IP blacklists

|

||||

- [x] Proof-of-work challenge for access by anonymous users

|

||||

|

||||

## Usage Instructions

|

||||

If you'd like to run your own instance of this server, you'll need to deploy it somewhere and configure it with your API keys. A few easy options are provided below, though you can also deploy it to any other service you'd like if you know what you're doing and the service supports Node.js.

|

||||

|

||||

### Personal Use (single-user)

|

||||

If you just want to run the proxy server to use yourself without hosting it for others:

|

||||

1. Install [Node.js](https://nodejs.org/en/download/) >= 18.0.0

|

||||

2. Clone this repository

|

||||

3. Create a `.env` file in the root of the project and add your API keys. See the [.env.example](./.env.example) file for an example.

|

||||

4. Install dependencies with `npm install`

|

||||

5. Run `npm run build`

|

||||

6. Run `npm start`

|

||||

|

||||

#### Updating

|

||||

You must re-run `npm install` and `npm run build` whenever you pull new changes from the repository.

|

||||

|

||||

#### Local Development

|

||||

Use `npm run start:dev` to run the proxy in development mode with watch mode enabled. Use `npm run type-check` to run the type checker across the project.

|

||||

|

||||

### Self-hosting

|

||||

[See here for instructions on how to self-host the application on your own VPS or local machine and expose it to the internet for others to use.](./docs/self-hosting.md)

|

||||

|

||||

**Ensure you set the `TRUSTED_PROXIES` environment variable according to your deployment.** Refer to [.env.example](./.env.example) and [config.ts](./src/config.ts) for more information.

|

||||

|

||||

## Building

|

||||

To build the project, run `npm run build`. This will compile the TypeScript code to JavaScript and output it to the `build` directory. You should run this whenever you pull new changes from the repository.

|

||||

|

||||

Note that if you are trying to build the server on a very memory-constrained (<= 1GB) VPS, you may need to run the build with `NODE_OPTIONS=--max_old_space_size=2048 npm run build` to avoid running out of memory during the build process, assuming you have swap enabled. The application itself should run fine on a 512MB VPS for most reasonable traffic levels.

|

||||

|

||||

## Forking

|

||||

If you are forking the repository on GitGud, you may wish to disable GitLab CI/CD or you will be spammed with emails about failed builds due not having any CI runners. You can do this by going to *Settings > General > Visibility, project features, permissions* and then disabling the "CI/CD" feature.

|

||||

@@ -0,0 +1,21 @@

|

||||

stages:

|

||||

- build

|

||||

|

||||

build_image:

|

||||

stage: build

|

||||

image:

|

||||

name: gcr.io/kaniko-project/executor:debug

|

||||

entrypoint: [""]

|

||||

script:

|

||||

- |

|

||||

if [ "$CI_COMMIT_REF_NAME" = "main" ]; then

|

||||

TAG="latest"

|

||||

else

|

||||

TAG=$CI_COMMIT_REF_NAME

|

||||

fi

|

||||

- echo "Building image with tag $TAG"

|

||||

- BASE64_AUTH=$(echo -n "$DOCKER_HUB_USERNAME:$DOCKER_HUB_ACCESS_TOKEN" | base64)

|

||||

- echo "{\"auths\":{\"https://index.docker.io/v1/\":{\"auth\":\"$BASE64_AUTH\"}}}" > /kaniko/.docker/config.json

|

||||

- /kaniko/executor --context $CI_PROJECT_DIR --dockerfile $CI_PROJECT_DIR/docker/ci/Dockerfile --destination docker.io/khanonci/oai-reverse-proxy:$TAG --build-arg CI_COMMIT_REF_NAME=$CI_COMMIT_REF_NAME --build-arg CI_COMMIT_SHA=$CI_COMMIT_SHA --build-arg CI_PROJECT_PATH=$CI_PROJECT_PATH

|

||||

only:

|

||||

- main

|

||||

@@ -0,0 +1,22 @@

|

||||

FROM node:18-bullseye-slim

|

||||

|

||||

WORKDIR /app

|

||||

COPY . .

|

||||

|

||||

RUN npm ci

|

||||

RUN npm run build

|

||||

RUN npm prune --production

|

||||

|

||||

EXPOSE 7860

|

||||

ENV PORT=7860

|

||||

ENV NODE_ENV=production

|

||||

|

||||

ARG CI_COMMIT_REF_NAME

|

||||

ARG CI_COMMIT_SHA

|

||||

ARG CI_PROJECT_PATH

|

||||

|

||||

ENV GITGUD_BRANCH=$CI_COMMIT_REF_NAME

|

||||

ENV GITGUD_COMMIT=$CI_COMMIT_SHA

|

||||

ENV GITGUD_PROJECT=$CI_PROJECT_PATH

|

||||

|

||||

CMD [ "npm", "start" ]

|

||||

@@ -0,0 +1,17 @@

|

||||

# Before running this, create a .env and greeting.md file.

|

||||

# Refer to .env.example for the required environment variables.

|

||||

# User-generated content is stored in the data directory.

|

||||

# When self-hosting, it's recommended to run this behind a reverse proxy like

|

||||

# nginx or Caddy to handle SSL/TLS and rate limiting. Refer to

|

||||

# docs/self-hosting.md for more information and an example nginx config.

|

||||

version: '3.8'

|

||||

services:

|

||||

oai-reverse-proxy:

|

||||

image: khanonci/oai-reverse-proxy:latest

|

||||

ports:

|

||||

- "127.0.0.1:7860:7860"

|

||||

env_file:

|

||||

- ./.env

|

||||

volumes:

|

||||

- ./greeting.md:/app/greeting.md

|

||||

- ./data:/app/data

|

||||

@@ -0,0 +1,15 @@

|

||||

FROM node:18-bullseye-slim

|

||||

RUN apt-get update && \

|

||||

apt-get install -y git

|

||||

RUN git clone https://gitgud.io/khanon/oai-reverse-proxy.git /app

|

||||

WORKDIR /app

|

||||

RUN chown -R 1000:1000 /app

|

||||

USER 1000

|

||||

RUN npm install

|

||||

COPY Dockerfile greeting.md* .env* ./

|

||||

RUN npm run build

|

||||

EXPOSE 7860

|

||||

ENV NODE_ENV=production

|

||||

# Huggigface free VMs have 16GB of RAM so we can be greedy

|

||||

ENV NODE_OPTIONS="--max-old-space-size=12882"

|

||||

CMD [ "npm", "start" ]

|

||||

@@ -0,0 +1,26 @@

|

||||

# syntax = docker/dockerfile:1.2

|

||||

|

||||

FROM node:18-bullseye-slim

|

||||

RUN apt-get update && \

|

||||

apt-get install -y curl

|

||||

|

||||

# Unlike Huggingface, Render can only deploy straight from a git repo and

|

||||

# doesn't allow you to create or modify arbitrary files via the web UI.

|

||||

# To use a greeting file, set `GREETING_URL` to a URL that points to a raw

|

||||

# text file containing your greeting, such as a GitHub Gist.

|

||||

|

||||

# You may need to clear the build cache if you change the greeting, otherwise

|

||||

# Render will use the cached layer from the previous build.

|

||||

|

||||

WORKDIR /app

|

||||

ARG GREETING_URL

|

||||

RUN if [ -n "$GREETING_URL" ]; then \

|

||||

curl -sL "$GREETING_URL" > greeting.md; \

|

||||

fi

|

||||

COPY . .

|

||||

RUN npm install

|

||||

RUN npm run build

|

||||

RUN --mount=type=secret,id=_env,dst=/etc/secrets/.env cat /etc/secrets/.env >> .env

|

||||

EXPOSE 10000

|

||||

ENV NODE_ENV=production

|

||||

CMD [ "npm", "start" ]

|

||||

Binary file not shown.

|

After Width: | Height: | Size: 4.2 KiB |

Binary file not shown.

|

After Width: | Height: | Size: 153 KiB |

Binary file not shown.

|

After Width: | Height: | Size: 22 KiB |

Binary file not shown.

|

After Width: | Height: | Size: 36 KiB |

@@ -0,0 +1,245 @@

|

||||

|

||||

openapi: 3.0.0

|

||||

info:

|

||||

version: 1.0.0

|

||||

title: User Management API

|

||||

paths:

|

||||

/admin/users:

|

||||

get:

|

||||

summary: List all users

|

||||

operationId: getUsers

|

||||

responses:

|

||||

"200":

|

||||

description: A list of users

|

||||

content:

|

||||

application/json:

|

||||

schema:

|

||||

type: object

|

||||

properties:

|

||||

users:

|

||||

type: array

|

||||

items:

|

||||

$ref: "#/components/schemas/User"

|

||||

count:

|

||||

type: integer

|

||||

format: int32

|

||||

post:

|

||||

summary: Create a new user

|

||||

operationId: createUser

|

||||

requestBody:

|

||||

content:

|

||||

application/json:

|

||||

schema:

|

||||

oneOf:

|

||||

- type: object

|

||||

properties:

|

||||

type:

|

||||

type: string

|

||||

enum: ["normal", "special"]

|

||||

- type: object

|

||||

properties:

|

||||

type:

|

||||

type: string

|

||||

enum: ["temporary"]

|

||||

expiresAt:

|

||||

type: integer

|

||||

format: int64

|

||||

tokenLimits:

|

||||

$ref: "#/components/schemas/TokenCount"

|

||||

responses:

|

||||

"200":

|

||||

description: The created user's token

|

||||

content:

|

||||

application/json:

|

||||

schema:

|

||||

type: object

|

||||

properties:

|

||||

token:

|

||||

type: string

|

||||

put:

|

||||

summary: Bulk upsert users

|

||||

operationId: bulkUpsertUsers

|

||||

requestBody:

|

||||

content:

|

||||

application/json:

|

||||

schema:

|

||||

type: object

|

||||

properties:

|

||||

users:

|

||||

type: array

|

||||

items:

|

||||

$ref: "#/components/schemas/User"

|

||||

responses:

|

||||

"200":

|

||||

description: The upserted users

|

||||

content:

|

||||

application/json:

|

||||

schema:

|

||||

type: object

|

||||

properties:

|

||||

upserted_users:

|

||||

type: array

|

||||

items:

|

||||

$ref: "#/components/schemas/User"

|

||||

count:

|

||||

type: integer

|

||||

format: int32

|

||||

"400":

|

||||

description: Bad request

|

||||

content:

|

||||

application/json:

|

||||

schema:

|

||||

type: object

|

||||

properties:

|

||||

error:

|

||||

type: string

|

||||

|

||||

/admin/users/{token}:

|

||||

get:

|

||||

summary: Get a user by token

|

||||

operationId: getUser

|

||||

parameters:

|

||||

- name: token

|

||||

in: path

|

||||

required: true

|

||||

schema:

|

||||

type: string

|

||||

responses:

|

||||

"200":

|

||||

description: A user

|

||||

content:

|

||||

application/json:

|

||||

schema:

|

||||

$ref: "#/components/schemas/User"

|

||||

"404":

|

||||

description: Not found

|

||||

content:

|

||||

application/json:

|

||||

schema:

|

||||

type: object

|

||||

properties:

|

||||

error:

|

||||

type: string

|

||||

put:

|

||||

summary: Update a user by token

|

||||

operationId: upsertUser

|

||||

parameters:

|

||||

- name: token

|

||||

in: path

|

||||

required: true

|

||||

schema:

|

||||

type: string

|

||||

requestBody:

|

||||

content:

|

||||

application/json:

|

||||

schema:

|

||||

$ref: "#/components/schemas/User"

|

||||

responses:

|

||||

"200":

|

||||

description: The updated user

|

||||

content:

|

||||

application/json:

|

||||

schema:

|

||||

$ref: "#/components/schemas/User"

|

||||

"400":

|

||||

description: Bad request

|

||||

content:

|

||||

application/json:

|

||||

schema:

|

||||

type: object

|

||||

properties:

|

||||

error:

|

||||

type: string

|

||||

delete:

|

||||

summary: Disables the user with the given token

|

||||

description: Optionally accepts a `disabledReason` query parameter. Returns the disabled user.

|

||||

parameters:

|

||||

- in: path

|

||||

name: token

|

||||

required: true

|

||||

schema:

|

||||

type: string

|

||||

description: The token of the user to disable

|

||||

- in: query

|

||||

name: disabledReason

|

||||

required: false

|

||||

schema:

|

||||

type: string

|

||||

description: The reason for disabling the user

|

||||

responses:

|

||||

'200':

|

||||

description: The disabled user

|

||||

content:

|

||||

application/json:

|

||||

schema:

|

||||

$ref: '#/components/schemas/User'

|

||||

'400':

|

||||

description: Bad request

|

||||

content:

|

||||

application/json:

|

||||

schema:

|

||||

type: object

|

||||

properties:

|

||||

error:

|

||||

type: string

|

||||

'404':

|

||||

description: Not found

|

||||

content:

|

||||

application/json:

|

||||

schema:

|

||||

type: object

|

||||

properties:

|

||||

error:

|

||||

type: string

|

||||

components:

|

||||

schemas:

|

||||

TokenCount:

|

||||

type: object

|

||||

properties:

|

||||

turbo:

|

||||

type: integer

|

||||

format: int32

|

||||

gpt4:

|

||||

type: integer

|

||||

format: int32

|

||||

"gpt4-32k":

|

||||

type: integer

|

||||

format: int32

|

||||

claude:

|

||||

type: integer

|

||||

format: int32

|

||||

User:

|

||||

type: object

|

||||

properties:

|

||||

token:

|

||||

type: string

|

||||

ip:

|

||||

type: array

|

||||

items:

|

||||

type: string

|

||||

nickname:

|

||||

type: string

|

||||

type:

|

||||

type: string

|

||||

enum: ["normal", "special"]

|

||||

promptCount:

|

||||

type: integer

|

||||

format: int32

|

||||

tokenLimits:

|

||||

$ref: "#/components/schemas/TokenCount"

|

||||

tokenCounts:

|

||||

$ref: "#/components/schemas/TokenCount"

|

||||

createdAt:

|

||||

type: integer

|

||||

format: int64

|

||||

lastUsedAt:

|

||||

type: integer

|

||||

format: int64

|

||||

disabledAt:

|

||||

type: integer

|

||||

format: int64

|

||||

disabledReason:

|

||||

type: string

|

||||

expiresAt:

|

||||

type: integer

|

||||

format: int64

|

||||

@@ -0,0 +1,58 @@

|

||||

# Configuring the proxy for AWS Bedrock

|

||||

|

||||

The proxy supports AWS Bedrock models via the `/proxy/aws/claude` endpoint. There are a few extra steps necessary to use AWS Bedrock compared to the other supported APIs.

|

||||

|

||||

- [Setting keys](#setting-keys)

|

||||

- [Attaching policies](#attaching-policies)

|

||||

- [Provisioning models](#provisioning-models)

|

||||

- [Note regarding logging](#note-regarding-logging)

|

||||

|

||||

## Setting keys

|

||||

|

||||

Use the `AWS_CREDENTIALS` environment variable to set the AWS API keys.

|

||||

|

||||

Like other APIs, you can provide multiple keys separated by commas. Each AWS key, however, is a set of credentials including the access key, secret key, and region. These are separated by a colon (`:`).

|

||||

|

||||

For example:

|

||||

|

||||

```

|

||||

AWS_CREDENTIALS=AKIA000000000000000:somesecretkey:us-east-1,AKIA111111111111111:anothersecretkey:us-west-2

|

||||

```

|

||||

|

||||

## Attaching policies

|

||||

|

||||

Unless your credentials belong to the root account, the principal will need to be granted the following permissions:

|

||||

|

||||

- `bedrock:InvokeModel`

|

||||

- `bedrock:InvokeModelWithResponseStream`

|

||||

- `bedrock:GetModelInvocationLoggingConfiguration`

|

||||

- The proxy needs this to determine whether prompt/response logging is enabled. By default, the proxy won't use credentials unless it can conclusively determine that logging is disabled, for privacy reasons.

|

||||

|

||||

Use the IAM console or the AWS CLI to attach these policies to the principal associated with the credentials.

|

||||

|

||||

## Provisioning models

|

||||

|

||||

AWS does not automatically provide accounts with access to every model. You will need to provision the models you want to use, in the regions you want to use them in. You can do this from the AWS console.

|

||||

|

||||

⚠️ **Models are region-specific.** Currently AWS only offers Claude in a small number of regions. Switch to the AWS region you want to use, then go to the models page and request access to **Anthropic / Claude**.

|

||||

|

||||

|

||||

|

||||

Access is generally granted more or less instantly. Once your account has access, you can enable the model by checking the box next to it.

|

||||

|

||||

You can also request Claude Instant, but support for this isn't fully implemented yet.

|

||||

|

||||

### Supported model IDs

|

||||

Users can send these model IDs to the proxy to invoke the corresponding models.

|

||||

- **Claude**

|

||||

- `anthropic.claude-v1` (~18k context, claude 1.3 -- EOL 2024-02-28)

|

||||

- `anthropic.claude-v2` (~100k context, claude 2.0)

|

||||

- `anthropic.claude-v2:1` (~200k context, claude 2.1)

|

||||

- **Claude Instant**

|

||||

- `anthropic.claude-instant-v1` (~100k context, claude instant 1.2)

|

||||

|

||||

## Note regarding logging

|

||||

|

||||

By default, the proxy will refuse to use keys if it finds that logging is enabled, or if it doesn't have permission to check logging status.

|

||||

|

||||

If you can't attach the `bedrock:GetModelInvocationLoggingConfiguration` policy to the principal, you can set the `ALLOW_AWS_LOGGING` environment variable to `true` to force the proxy to use the keys anyway. A warning will appear on the info page when this is enabled.

|

||||

@@ -0,0 +1,30 @@

|

||||

# Configuring the proxy for Azure

|

||||

|

||||

The proxy supports Azure OpenAI Service via the `/proxy/azure/openai` endpoint. The process of setting it up is slightly different from regular OpenAI.

|

||||

|

||||

- [Setting keys](#setting-keys)

|

||||

- [Model assignment](#model-assignment)

|

||||

|

||||

## Setting keys

|

||||

|

||||

Use the `AZURE_CREDENTIALS` environment variable to set the Azure API keys.

|

||||

|

||||

Like other APIs, you can provide multiple keys separated by commas. Each Azure key, however, is a set of values including the Resource Name, Deployment ID, and API key. These are separated by a colon (`:`).

|

||||

|

||||

For example:

|

||||

```

|

||||

AZURE_CREDENTIALS=contoso-ml:gpt4-8k:0123456789abcdef0123456789abcdef,northwind-corp:testdeployment:0123456789abcdef0123456789abcdef

|

||||

```

|

||||

|

||||

## Model assignment

|

||||

Note that each Azure deployment is assigned a model when you create it in the Azure OpenAI Service portal. If you want to use a different model, you'll need to create a new deployment, and therefore a new key to be added to the AZURE_CREDENTIALS environment variable. Each credential only grants access to one model.

|

||||

|

||||

### Supported model IDs

|

||||

Users can send normal OpenAI model IDs to the proxy to invoke the corresponding models. For the most part they work the same with Azure. GPT-3.5 Turbo has an ID of "gpt-35-turbo" because Azure doesn't allow periods in model names, but the proxy should automatically convert this to the correct ID.

|

||||

|

||||

As noted above, you can only use model IDs for which a deployment has been created and added to the proxy.

|

||||

|

||||

## On content filtering

|

||||

Be aware that all Azure OpenAI Service deployments have content filtering enabled by default at a Medium level. Prompts or responses which are deemed to be inappropriate will be rejected by the API. This is a feature of the Azure OpenAI Service and not the proxy.

|

||||

|

||||

You can disable this from deployment's settings within Azure, but you would need to request an exemption from Microsoft for your organization first. See [this page](https://learn.microsoft.com/en-us/azure/ai-services/openai/how-to/content-filters) for more information.

|

||||

@@ -0,0 +1,71 @@

|

||||

# Configuring the proxy for DALL-E

|

||||

|

||||

The proxy supports DALL-E 2 and DALL-E 3 image generation via the `/proxy/openai-images` endpoint. By default it is disabled as it is somewhat expensive and potentially more open to abuse than text generation.

|

||||

|

||||

- [Updating your Dockerfile](#updating-your-dockerfile)

|

||||

- [Enabling DALL-E](#enabling-dall-e)

|

||||

- [Setting quotas](#setting-quotas)

|

||||

- [Rate limiting](#rate-limiting)

|

||||

|

||||

## Updating your Dockerfile

|

||||

If you are using a previous version of the Dockerfile supplied with the proxy, it doesn't have the necessary permissions to let the proxy save temporary files.

|

||||

|

||||

You can replace the entire thing with the new Dockerfile at [./docker/huggingface/Dockerfile](../docker/huggingface/Dockerfile) (or the equivalent for Render deployments).

|

||||

|

||||

You can also modify your existing Dockerfile; just add the following lines after the `WORKDIR` line:

|

||||

|

||||

```Dockerfile

|

||||

# Existing

|

||||

RUN git clone https://gitgud.io/khanon/oai-reverse-proxy.git /app

|

||||

WORKDIR /app

|

||||

|

||||

# Take ownership of the app directory and switch to the non-root user

|

||||

RUN chown -R 1000:1000 /app

|

||||

USER 1000

|

||||

|

||||

# Existing

|

||||

RUN npm install

|

||||

```

|

||||

|

||||

## Enabling DALL-E

|

||||

Add `dall-e` to the `ALLOWED_MODEL_FAMILIES` environment variable to enable DALL-E. For example:

|

||||

|

||||

```

|

||||

# GPT3.5 Turbo, GPT-4, GPT-4 Turbo, and DALL-E

|

||||

ALLOWED_MODEL_FAMILIES=turbo,gpt-4,gpt-4turbo,dall-e

|

||||

|

||||

# All models as of this writing

|

||||

ALLOWED_MODEL_FAMILIES=turbo,gpt4,gpt4-32k,gpt4-turbo,claude,gemini-pro,aws-claude,dall-e

|

||||

```

|

||||

|

||||

Refer to [.env.example](../.env.example) for a full list of supported model families. You can add `dall-e` to that list to enable all models.

|

||||

|

||||

## Setting quotas

|

||||

DALL-E doesn't bill by token like text generation models. Instead there is a fixed cost per image generated, depending on the model, image size, and selected quality.

|

||||

|

||||

The proxy still uses tokens to set quotas for users. The cost for each generated image will be converted to "tokens" at a rate of 100000 tokens per US$1.00. This works out to a similar cost-per-token as GPT-4 Turbo, so you can use similar token quotas for both.

|

||||

|

||||

Use `TOKEN_QUOTA_DALL_E` to set the default quota for image generation. Otherwise it works the same as token quotas for other models.

|

||||

|

||||

```

|

||||

# ~50 standard DALL-E images per refresh period, or US$2.00

|

||||

TOKEN_QUOTA_DALL_E=200000

|

||||

```

|

||||

|

||||

Refer to [https://openai.com/pricing](https://openai.com/pricing) for the latest pricing information. As of this writing, the cheapest DALL-E 3 image costs $0.04 per generation, which works out to 4000 tokens. Higher resolution and quality settings can cost up to $0.12 per image, or 12000 tokens.

|

||||

|

||||

## Rate limiting

|

||||

The old `MODEL_RATE_LIMIT` setting has been split into `TEXT_MODEL_RATE_LIMIT` and `IMAGE_MODEL_RATE_LIMIT`. Whatever value you previously set for `MODEL_RATE_LIMIT` will be used for text models.

|

||||

|

||||

If you don't specify a `IMAGE_MODEL_RATE_LIMIT`, it defaults to half of the `TEXT_MODEL_RATE_LIMIT`, to a minimum of 1 image per minute.

|

||||

|

||||

```

|

||||

# 4 text generations per minute, 2 images per minute

|

||||

TEXT_MODEL_RATE_LIMIT=4

|

||||

IMAGE_MODEL_RATE_LIMIT=2

|

||||

```

|

||||

|

||||

If a prompt is filtered by OpenAI's content filter, it won't count towards the rate limit.

|

||||

|

||||

## Hiding recent images

|

||||

By default, the proxy shows the last 12 recently generated images by users. You can hide this section by setting `SHOW_RECENT_IMAGES` to `false`.

|

||||

@@ -0,0 +1,104 @@

|

||||

# Deploy to Huggingface Space

|

||||

|

||||

**⚠️ This method is no longer recommended. Please use the [self-hosting instructions](./self-hosting.md) instead.**

|

||||

|

||||

This repository can be deployed to a [Huggingface Space](https://huggingface.co/spaces). This is a free service that allows you to run a simple server in the cloud. You can use it to safely share your OpenAI API key with a friend.

|

||||

|

||||

### 1. Get an API key

|

||||

- Go to [OpenAI](https://openai.com/) and sign up for an account. You can use a free trial key for this as long as you provide SMS verification.

|

||||

- Claude is not publicly available yet, but if you have access to it via the [Anthropic](https://www.anthropic.com/) closed beta, you can also use that key with the proxy.

|

||||

|

||||

### 2. Create an empty Huggingface Space

|

||||

- Go to [Huggingface](https://huggingface.co/) and sign up for an account.

|

||||

- Once logged in, [create a new Space](https://huggingface.co/new-space).

|

||||

- Provide a name for your Space and select "Docker" as the SDK. Select "Blank" for the template.

|

||||

- Click "Create Space" and wait for the Space to be created.

|

||||

|

||||

|

||||

|

||||

### 3. Create an empty Dockerfile

|

||||

- Once your Space is created, you'll see an option to "Create the Dockerfile in your browser". Click that link.

|

||||

|

||||

|

||||

- Paste the following into the text editor and click "Save".

|

||||

```dockerfile

|

||||

FROM node:18-bullseye-slim

|

||||

RUN apt-get update && \

|

||||

apt-get install -y git

|

||||

RUN git clone https://gitgud.io/khanon/oai-reverse-proxy.git /app

|

||||

WORKDIR /app

|

||||

RUN chown -R 1000:1000 /app

|

||||

USER 1000

|

||||

RUN npm install

|

||||

COPY Dockerfile greeting.md* .env* ./

|

||||

RUN npm run build

|

||||

EXPOSE 7860

|

||||

ENV NODE_ENV=production

|

||||

ENV NODE_OPTIONS="--max-old-space-size=12882"

|

||||

CMD [ "npm", "start" ]

|

||||

```

|

||||

- Click "Commit new file to `main`" to save the Dockerfile.

|

||||

|

||||

|

||||

|

||||

### 4. Set your API key as a secret

|

||||

- Click the Settings button in the top right corner of your repository.

|

||||

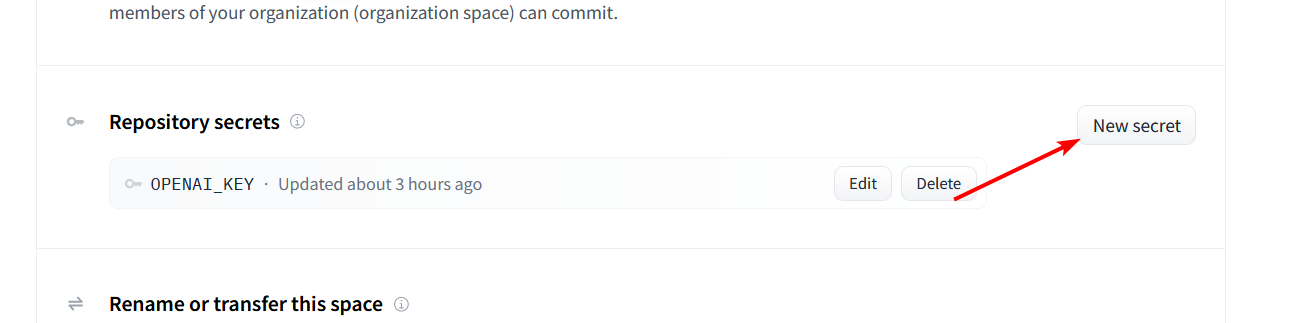

- Scroll down to the `Repository Secrets` section and click `New Secret`.

|

||||

|

||||

|

||||

|

||||

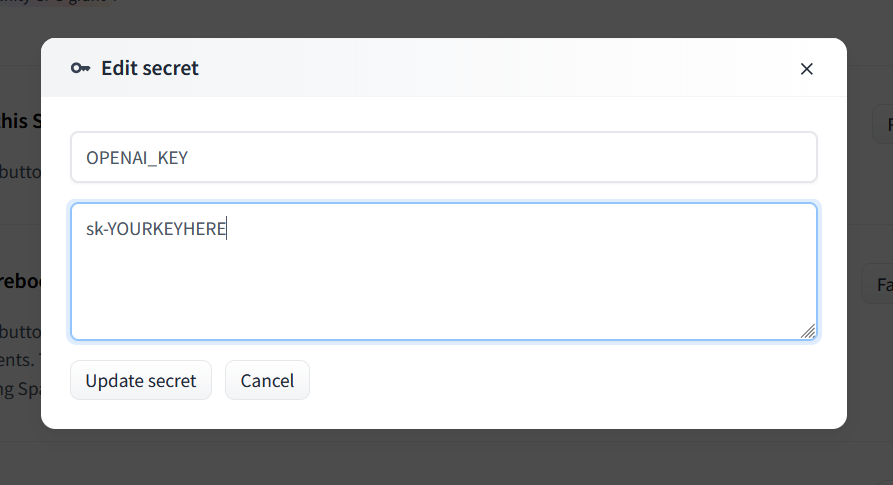

- Enter `OPENAI_KEY` as the name and your OpenAI API key as the value.

|

||||

- For Claude, set `ANTHROPIC_KEY` instead.

|

||||

- You can use both types of keys at the same time if you want.

|

||||

|

||||

|

||||

|

||||

### 5. Deploy the server

|

||||

- Your server should automatically deploy when you add the secret, but if not you can select `Factory Reboot` from that same Settings menu.

|

||||

|

||||

### 6. Share the link

|

||||

- The Service Info section below should show the URL for your server. You can share this with anyone to safely give them access to your API key.

|

||||

- Your friend doesn't need any API key of their own, they just need your link.

|

||||

|

||||

# Optional

|

||||

|

||||

## Updating the server

|

||||

|

||||

To update your server, go to the Settings menu and select `Factory Reboot`. This will pull the latest version of the code from GitHub and restart the server.

|

||||

|

||||

Note that if you just perform a regular Restart, the server will be restarted with the same code that was running before.

|

||||

|

||||

## Adding a greeting message

|

||||

|

||||

You can create a Markdown file called `greeting.md` to display a message on the Server Info page. This is a good place to put instructions for how to use the server.

|

||||

|

||||

## Customizing the server

|

||||

|

||||

The server will be started with some default configuration, but you can override it by adding a `.env` file to your Space. You can use Huggingface's web editor to create a new `.env` file alongside your Dockerfile. Huggingface will restart your server automatically when you save the file.

|

||||

|

||||

Here are some example settings:

|

||||

```shell

|

||||

# Requests per minute per IP address

|

||||

MODEL_RATE_LIMIT=4

|

||||

# Max tokens to request from OpenAI

|

||||

MAX_OUTPUT_TOKENS_OPENAI=256

|

||||

# Max tokens to request from Anthropic (Claude)

|

||||

MAX_OUTPUT_TOKENS_ANTHROPIC=512

|

||||

# Block prompts containing disallowed characters

|

||||

REJECT_DISALLOWED=false

|

||||

REJECT_MESSAGE="This content violates /aicg/'s acceptable use policy."

|

||||

```

|

||||

|

||||

See `.env.example` for a full list of available settings, or check `config.ts` for details on what each setting does.

|

||||

|

||||

## Restricting access to the server

|

||||

|

||||

If you want to restrict access to the server, you can set a `PROXY_KEY` secret. This key will need to be passed in the Authentication header of every request to the server, just like an OpenAI API key. Set the `GATEKEEPER` mode to `proxy_key`, and then set the `PROXY_KEY` variable to whatever password you want.

|

||||

|

||||

Add this using the same method as the OPENAI_KEY secret above. Don't add this to your `.env` file because that file is public and anyone can see it.

|

||||

|

||||

Example:

|

||||

```

|

||||

GATEKEEPER=proxy_key

|

||||

PROXY_KEY=your_secret_password

|

||||

```

|

||||

@@ -0,0 +1,56 @@

|

||||

# Deploy to Render.com

|

||||

|

||||

**⚠️ This method is no longer supported or recommended and may not work. Please use the [self-hosting instructions](./self-hosting.md) instead.**

|

||||

|

||||

Render.com offers a free tier that includes 750 hours of compute time per month. This is enough to run a single proxy instance 24/7. Instances shut down after 15 minutes without traffic but start up again automatically when a request is received. You can use something like https://app.checklyhq.com/ to ping your proxy every 15 minutes to keep it alive.

|

||||

|

||||

### 1. Create account

|

||||

- [Sign up for Render.com](https://render.com/) to create an account and access the dashboard.

|

||||

|

||||

### 2. Create a service using a Blueprint

|

||||

Render allows you to deploy and auutomatically configure a repository containing a [render.yaml](../render.yaml) file using its Blueprints feature. This is the easiest way to get started.

|

||||

|

||||

- Click the **Blueprints** tab at the top of the dashboard.

|

||||

- Click **New Blueprint Instance**.

|

||||

- Under **Public Git repository**, enter `https://gitlab.com/khanon/oai-proxy`.

|

||||

- Note that this is not the GitGud repository, but a mirror on GitLab.

|

||||

- Click **Continue**.

|

||||

- Under **Blueprint Name**, enter a name.

|

||||

- Under **Branch**, enter `main`.

|

||||

- Click **Apply**.

|

||||

|

||||

The service will be created according to the instructions in the `render.yaml` file. Don't wait for it to complete as it will fail due to missing environment variables. Instead, proceed to the next step.

|

||||

|

||||

### 3. Set environment variables

|

||||

- Return to the **Dashboard** tab.

|

||||

- Click the name of the service you just created, which may show as "Deploy failed".

|

||||

- Click the **Environment** tab.

|

||||

- Click **Add Secret File**.

|

||||

- Under **Filename**, enter `.env`.

|

||||

- Under **Contents**, enter all of your environment variables, one per line, in the format `NAME=value`.

|

||||

- For example, `OPENAI_KEY=sk-abc123`.

|

||||

- Click **Save Changes**.

|

||||

|

||||

**IMPORTANT:** Set `TRUSTED_PROXIES=3`, otherwise users' IP addresses will not be recorded correctly (the server will see the IP address of Render's load balancer instead of the user's real IP address).

|

||||

|

||||

The service will automatically rebuild and deploy with the new environment variables. This will take a few minutes. The link to your deployed proxy will appear at the top of the page.

|

||||

|

||||

If you want to change the URL, go to the **Settings** tab of your Web Service and click the **Edit** button next to **Name**. You can also set a custom domain, though I haven't tried this yet.

|

||||

|

||||

# Optional

|

||||

|

||||

## Updating the server

|

||||

|

||||

To update your server, go to the page for your Web Service and click **Manual Deploy** > **Deploy latest commit**. This will pull the latest version of the code and redeploy the server.

|

||||

|

||||

_If you have trouble with this, you can also try selecting **Clear build cache & deploy** instead from the same menu._

|

||||

|

||||

## Adding a greeting message

|

||||

|

||||

To show a greeting message on the Server Info page, set the `GREETING_URL` environment variable within Render to the URL of a Markdown file. This URL should point to a raw text file, not an HTML page. You can use a public GitHub Gist or GitLab Snippet for this. For example: `GREETING_URL=https://gitlab.com/-/snippets/2542011/raw/main/greeting.md`. You can change the title of the page by setting the `SERVER_TITLE` environment variable.

|

||||

|

||||

Don't set `GREETING_URL` in the `.env` secret file you created earlier; it must be set in Render's environment variables section for it to work correctly.

|

||||

|

||||

## Customizing the server

|

||||

|

||||

You can customize the server by editing the `.env` configuration you created earlier. Refer to [.env.example](../.env.example) for a list of all available configuration options. Further information can be found in the [config.ts](../src/config.ts) file.

|

||||

@@ -0,0 +1,35 @@

|

||||

# Configuring the proxy for Vertex AI (GCP)

|

||||

|

||||

The proxy supports GCP models via the `/proxy/gcp/claude` endpoint. There are a few extra steps necessary to use GCP compared to the other supported APIs.

|

||||

|

||||

- [Setting keys](#setting-keys)

|

||||

- [Setup Vertex AI](#setup-vertex-ai)

|

||||

- [Supported model IDs](#supported-model-ids)

|

||||

|

||||

## Setting keys

|

||||

|

||||

Use the `GCP_CREDENTIALS` environment variable to set the GCP API keys.

|

||||

|

||||

Like other APIs, you can provide multiple keys separated by commas. Each GCP key, however, is a set of credentials including the project id, client email, region and private key. These are separated by a colon (`:`).

|

||||

|

||||

For example:

|

||||

|

||||

```

|

||||

GCP_CREDENTIALS=my-first-project:xxx@yyy.com:us-east5:-----BEGIN PRIVATE KEY-----xxx-----END PRIVATE KEY-----,my-first-project2:xxx2@yyy.com:us-east5:-----BEGIN PRIVATE KEY-----xxx-----END PRIVATE KEY-----

|

||||

```

|

||||

|

||||

## Setup Vertex AI

|

||||

1. Go to [https://cloud.google.com/vertex-ai](https://cloud.google.com/vertex-ai) and sign up for a GCP account. ($150 free credits without credit card or $300 free credits with credit card, credits expire in 90 days)

|

||||

2. Go to [https://console.cloud.google.com/marketplace/product/google/aiplatform.googleapis.com](https://console.cloud.google.com/marketplace/product/google/aiplatform.googleapis.com) to enable Vertex AI API.

|

||||

3. Go to [https://console.cloud.google.com/vertex-ai](https://console.cloud.google.com/vertex-ai) and navigate to Model Garden to apply for access to the Claude models.

|

||||

4. Create a [Service Account](https://console.cloud.google.com/projectselector/iam-admin/serviceaccounts/create?walkthrough_id=iam--create-service-account#step_index=1) , and make sure to grant the role of "Vertex AI User" or "Vertex AI Administrator".

|

||||

5. On the service account page you just created, create a new key and select "JSON". The JSON file will be downloaded automatically.

|

||||

6. The required credential is in the JSON file you just downloaded.

|

||||

|

||||

## Supported model IDs

|

||||

Users can send these model IDs to the proxy to invoke the corresponding models.

|

||||

- **Claude**

|

||||

- `claude-3-haiku@20240307`

|

||||

- `claude-3-sonnet@20240229`

|

||||

- `claude-3-opus@20240229`

|

||||

- `claude-3-5-sonnet@20240620`

|

||||

@@ -0,0 +1,61 @@

|

||||

# Warning

|

||||

**I strongly suggest against using this feature with a Google account that you care about.** Depending on the content of the prompts people submit, Google may flag the spreadsheet as containing inappropriate content. This seems to prevent you from sharing that spreadsheet _or any others on the account. This happened with my throwaway account during testing; the existing shared spreadsheet continues to work but even completely new spreadsheets are flagged and cannot be shared.

|

||||

|

||||

I'll be looking into alternative storage backends but you should not use this implementation with a Google account you care about, or even one remotely connected to your main accounts (as Google has a history of linking accounts together via IPs/browser fingerprinting). Use a VPN and completely isolated VM to be safe.

|

||||

|

||||

# Configuring Google Sheets Prompt Logging

|

||||

This proxy can log incoming prompts and model responses to Google Sheets. Some configuration on the Google side is required to enable this feature. The APIs used are free, but you will need a Google account and a Google Cloud Platform project.

|

||||

|

||||

NOTE: Concurrency is not supported. Don't connect two instances of the server to the same spreadsheet or bad things will happen.

|

||||

|

||||

## Prerequisites

|

||||

- A Google account

|

||||

- **USE A THROWAWAY ACCOUNT!**

|

||||

- A Google Cloud Platform project

|

||||

|

||||

### 0. Create a Google Cloud Platform Project

|

||||

_A Google Cloud Platform project is required to enable programmatic access to Google Sheets. If you already have a project, skip to the next step. You can also see the [Google Cloud Platform documentation](https://developers.google.com/workspace/guides/create-project) for more information._

|

||||

|

||||

- Go to the Google Cloud Platform Console and [create a new project](https://console.cloud.google.com/projectcreate).

|

||||

|

||||

### 1. Enable the Google Sheets API

|

||||

_The Google Sheets API must be enabled for your project. You can also see the [Google Sheets API documentation](https://developers.google.com/sheets/api/quickstart/nodejs) for more information._

|

||||

|

||||

- Go to the [Google Sheets API page](https://console.cloud.google.com/apis/library/sheets.googleapis.com) and click **Enable**, then fill in the form to enable the Google Sheets API for your project.

|

||||

<!-- TODO: Add screenshot of Enable page and describe filling out the form -->

|

||||

|

||||

### 2. Create a Service Account

|

||||

_A service account is required to authenticate the proxy to Google Sheets._

|

||||

|

||||

- Once the Google Sheets API is enabled, click the **Credentials** tab on the Google Sheets API page.

|

||||

- Click **Create credentials** and select **Service account**.

|

||||

- Provide a name for the service account and click **Done** (the second and third steps can be skipped).

|

||||

|

||||

### 3. Download the Service Account Key

|

||||

_Once your account is created, you'll need to download the key file and include it in the proxy's secrets configuration._

|

||||

|

||||

- Click the Service Account you just created in the list of service accounts for the API.

|

||||

- Click the **Keys** tab and click **Add key**, then select **Create new key**.

|

||||

- Select **JSON** as the key type and click **Create**.

|

||||

|

||||

The JSON file will be downloaded to your computer.

|

||||

|

||||

### 4. Set the Service Account key as a Secret

|

||||

_The JSON key file must be set as a secret in the proxy's configuration. Because files cannot be included in the secrets configuration, you'll need to base64 encode the file's contents and paste the encoded string as the value of the `GOOGLE_SHEETS_KEY` secret._

|

||||

|

||||

- Open the JSON key file in a text editor and copy the contents.

|

||||

- Visit the [base64 encode/decode tool](https://www.base64encode.org/) and paste the contents into the box, then click **Encode**.

|

||||

- Copy the encoded string and paste it as the value of the `GOOGLE_SHEETS_KEY` secret in the deployment's secrets configuration.

|

||||

- **WARNING:** Don't reveal this string publically. The `.env` file is NOT private -- unless you're running the proxy locally, you should not use it to store secrets!

|

||||

|

||||

### 5. Create a new spreadsheet and share it with the service account

|

||||

_The service account must be given permission to access the logging spreadsheet. Each service account has a unique email address, which can be found in the JSON key file; share the spreadsheet with that email address just as you would share it with another user._

|

||||

|

||||

- Open the JSON key file in a text editor and copy the value of the `client_email` field.

|

||||

- Open the spreadsheet you want to log to, or create a new one, and click **File > Share**.

|

||||

- Paste the service account's email address into the **Add people or groups** field. Ensure the service account has **Editor** permissions, then click **Done**.

|

||||

|

||||

### 6. Set the spreadsheet ID as a Secret

|

||||

_The spreadsheet ID must be set as a secret in the proxy's configuration. The spreadsheet ID can be found in the URL of the spreadsheet. For example, the spreadsheet ID for `https://docs.google.com/spreadsheets/d/1X2Y3Z/edit#gid=0` is `1X2Y3Z`. The ID isn't necessarily a sensitive value if you intend for the spreadsheet to be public, but it's still recommended to set it as a secret._

|

||||

|

||||

- Copy the spreadsheet ID and paste it as the value of the `GOOGLE_SHEETS_SPREADSHEET_ID` secret in the deployment's secrets configuration.

|

||||

@@ -0,0 +1,135 @@

|

||||

# Proof-of-work Verification

|

||||

|

||||

You can require users to complete a proof-of-work before they can access the

|

||||

proxy. This can increase the cost of denial of service attacks and slow down

|

||||

automated abuse.

|

||||

|

||||

When configured, users access the challenge UI and request a token. The server

|

||||

sends a challenge to the client, which asks the user's browser to find a

|

||||

solution to the challenge that meets a certain constraint (the difficulty

|

||||

level). Once the user has found a solution, they can submit it to the server

|

||||

and get a user token valid for a period you specify.

|

||||

|

||||

The proof-of-work challenge uses the argon2id hash function.

|

||||

|

||||

## Configuration

|

||||

|

||||

To enable proof-of-work verification, set the following environment variables:

|

||||

|

||||

```

|

||||

GATEKEEPER=user_token

|

||||

CAPTCHA_MODE=proof_of_work

|

||||

# Validity of the token in hours

|

||||

POW_TOKEN_HOURS=24

|

||||

# Max number of IPs that can use a user_token issued via proof-of-work

|

||||

POW_TOKEN_MAX_IPS=2

|

||||

# The difficulty level of the proof-of-work challenge. You can use one of the

|

||||

# predefined levels specified below, or you can specify a custom number of

|

||||

# expected hash iterations.

|

||||

POW_DIFFICULTY_LEVEL=low

|

||||

# The time limit for solving the challenge, in minutes

|

||||

POW_CHALLENGE_TIMEOUT=30

|

||||

```

|

||||

|

||||

## Difficulty Levels

|

||||

|

||||

The difficulty level controls how long, on average, it will take for a user to

|

||||

solve the proof-of-work challenge. Due to randomness, the actual time can very

|

||||

significantly; lucky users may solve the challenge in a fraction of the average

|

||||

time, while unlucky users may take much longer.

|

||||

|

||||

The difficulty level doesn't affect the speed of the hash function itself, only

|

||||

the number of hashes that will need to be computed. Therefore, the time required

|

||||

to complete the challenge scales linearly with the difficulty level's iteration

|

||||

count.

|

||||

|

||||

You can adjust the difficulty level while the proxy is running from the admin

|

||||

interface.

|

||||

|

||||

Be aware that there is a time limit for solving the challenge, by default set to

|

||||

30 minutes. Above 'high' difficulty, you will probably need to increase the time

|

||||

limit or it will be very hard for users with slow devices to find a solution

|

||||

within the time limit.

|

||||

|

||||

### Low

|

||||

|

||||

- Average of 200 iterations required

|

||||

- Default setting.

|

||||

|

||||

### Medium

|

||||

|

||||

- Average of 900 iterations required

|

||||

|

||||

### High

|

||||

|

||||

- Average of 1900 iterations required

|

||||

|

||||

### Extreme

|

||||

|

||||

- Average of 4000 iterations required

|

||||

- Not recommended unless you are expecting very high levels of abuse

|

||||

- May require increasing `POW_CHALLENGE_TIMEOUT`

|

||||

|

||||

### Custom

|

||||

|

||||

Setting `POW_DIFFICULTY_LEVEL` to an integer will use that number of iterations

|

||||

as the difficulty level.

|

||||

|

||||

## Other challenge settings

|

||||

|

||||

- `POW_CHALLENGE_TIMEOUT`: The time limit for solving the challenge, in minutes.

|

||||

Default is 30.

|

||||

- `POW_TOKEN_HOURS`: The period of time for which a user token issued via proof-

|

||||

of-work can be used. Default is 24 hours. Starts when the challenge is solved.

|

||||

- `POW_TOKEN_MAX_IPS`: The maximum number of unique IPs that can use a single

|

||||

user token issued via proof-of-work. Default is 2.

|

||||

- `POW_TOKEN_PURGE_HOURS`: The period of time after which an expired user token

|

||||

issued via proof-of-work will be removed from the database. Until it is

|

||||

purged, users can refresh expired tokens by completing a half-difficulty

|

||||

challenge. Default is 48 hours.

|

||||

- `POW_MAX_TOKENS_PER_IP`: The maximum number of active user tokens that can

|

||||

be associated with a single IP address. After this limit is reached, the

|

||||

oldest token will be forcibly expired when a new token is issued. Set to 0

|

||||

to disable this feature. Default is 0.

|

||||

|

||||

## Custom argon2id parameters

|

||||

|

||||

You can set custom argon2id parameters for the proof-of-work challenge.

|

||||

Generally, you should not need to change these unless you have a specific

|

||||

reason to do so.

|

||||

|

||||

The listed values are the defaults.

|

||||

|

||||

```

|

||||

ARGON2_TIME_COST=8

|

||||

ARGON2_MEMORY_KB=65536

|

||||

ARGON2_PARALLELISM=1

|

||||

ARGON2_HASH_LENGTH=32

|

||||

```

|

||||

|

||||

Increasing parallelism will not do much except increase memory consumption for

|

||||

both the client and server, because browser proof-of-work implementations are

|

||||

single-threaded. It's better to increase the time cost if you want to increase

|

||||

the difficulty.

|

||||

|

||||

Increasing memory too much may cause memory exhaustion on some mobile devices,

|

||||

particularly on iOS due to the way Safari handles WebAssembly memory allocation.

|

||||

|

||||

## Tested hash rates

|

||||

|

||||

These were measured with the default argon2id parameters listed above. These

|

||||

tests were not at all scientific so take them with a grain of salt.

|

||||

|

||||

Safari does not like large WASM memory usage, so concurrency is limited to 4 to

|

||||

avoid overallocating memory on mobile WebKit browsers. Thermal throttling can

|

||||

also significantly reduce hash rates on mobile devices.

|

||||

|

||||

- Intel Core i9-13900K (Chrome): 33-35 H/s

|

||||

- Intel Core i9-13900K (Firefox): 29-32 H/s

|

||||

- Intel Core i9-13900K (Chrome, in VM limited to 4 cores): 12.2 - 13.0 H/s

|

||||

- iPad Pro (M2) (Safari, 6 workers): 8.0 - 10 H/s

|

||||

- Thermal throttles early. 8 cores is normal concurrency, but unstable.

|

||||

- iPhone 15 Pro Max (Safari): 4.0 - 4.6 H/s

|

||||

- Samsung Galaxy S10e (Chrome): 3.6 - 3.8 H/s

|

||||

- This is a 2019 phone almost matching an iPhone five years newer because of

|

||||

bad Safari performance.

|

||||

@@ -0,0 +1,150 @@

|

||||

# Quick self-hosting guide

|

||||

|

||||

Temporary guide for self-hosting. This will be improved in the future to provide more robust instructions and options. Provided commands are for Ubuntu.

|

||||

|

||||

This uses prebuilt Docker images for convenience. If you want to make adjustments to the code you can instead clone the repo and follow the Local Development guide in the [README](../README.md).

|

||||

|

||||

## Table of Contents

|

||||

- [Requirements](#requirements)

|

||||

- [Running the application](#running-the-application)

|

||||

- [Setting up a reverse proxy](#setting-up-a-reverse-proxy)

|

||||

- [trycloudflare](#trycloudflare)

|

||||

- [nginx](#nginx)

|

||||

- [Example basic nginx configuration (no SSL)](#example-basic-nginx-configuration-no-ssl)

|

||||

- [Example with Cloudflare SSL](#example-with-cloudflare-ssl)

|

||||

- [Updating/Restarting the application](#updatingrestarting-the-application)

|

||||

|

||||

## Requirements

|

||||

|

||||

- Docker

|

||||

- Docker Compose

|

||||

- A VPS with at least 512MB of RAM (1GB recommended)

|

||||

- A domain name

|

||||

|

||||

If you don't have a VPS and domain name you can use TryCloudflare to set up a temporary URL that you can share with others. See [trycloudflare](#trycloudflare) for more information.

|

||||

|

||||

## Running the application

|

||||

|

||||

- Install Docker and Docker Compose

|

||||

- Create a new directory for the application

|

||||

- This will contain your .env file, greeting file, and any user-generated files

|

||||

- Execute the following commands:

|

||||

- ```

|

||||

touch .env

|

||||

touch greeting.md

|

||||

echo "OPENAI_KEY=your-openai-key" >> .env

|

||||

curl https://gitgud.io/khanon/oai-reverse-proxy/-/raw/main/docker/docker-compose-selfhost.yml -o docker-compose.yml

|

||||

```

|

||||

- You can set further environment variables and keys in the `.env` file. See [.env.example](../.env.example) for a list of available options.

|

||||

- You can set a custom greeting in `greeting.md`. This will be displayed on the homepage.

|

||||

- Run `docker compose up -d`

|

||||

|

||||

You can check logs with `docker compose logs -n 100 -f`.

|

||||

|

||||

The provided docker-compose file listens on port 7860 but binds to localhost only. You should use a reverse proxy to expose the application to the internet as described in the next section.

|

||||

|

||||

## Setting up a reverse proxy

|

||||

|

||||

Rather than exposing the application directly to the internet, it is recommended to set up a reverse proxy. This will allow you to use HTTPS and add additional security measures.

|

||||

|

||||

### trycloudflare

|

||||

|

||||

This will give you a temporary (72 hours) URL that you can use to let others connect to your instance securely, without having to set up a reverse proxy. If you are running the server on your home network, this is probably the best option.

|

||||

- Install `cloudflared` following the instructions at [try.cloudflare.com](https://try.cloudflare.com/).

|

||||

- Run `cloudflared tunnel --url http://localhost:7860`

|

||||

- You will be given a temporary URL that you can share with others.

|

||||

|

||||

If you have a VPS, you should use a proper reverse proxy like nginx instead for a more permanent solution which will allow you to use your own domain name, handle SSL, and add additional security/anti-abuse measures.

|

||||

|

||||

### nginx

|

||||

|

||||

First, install nginx.

|

||||

- `sudo apt update && sudo apt install nginx`

|

||||

|

||||

#### Example basic nginx configuration (no SSL)

|

||||

|

||||

- `sudo nano /etc/nginx/sites-available/oai.conf`

|

||||

- ```

|

||||

server {

|

||||

listen 80;

|

||||

server_name example.com;

|

||||

|

||||

location / {

|

||||

proxy_pass http://localhost:7860;

|

||||

}

|

||||

}

|

||||

```

|

||||

- Replace `example.com` with your domain name.

|

||||

- Ctrl+X to exit, Y to save, Enter to confirm.

|

||||

- `sudo ln -s /etc/nginx/sites-available/oai.conf /etc/nginx/sites-enabled`

|

||||

- `sudo nginx -t`

|

||||

- This will check the configuration file for errors.

|

||||

- `sudo systemctl restart nginx`

|

||||

- This will restart nginx and apply the new configuration.

|

||||

|

||||

#### Example with Cloudflare SSL

|

||||

|

||||

This allows you to use a self-signed certificate on the server, and have Cloudflare handle client SSL. You need to have a Cloudflare account and have your domain set up with Cloudflare already, pointing to your server's IP address.

|

||||

|

||||

- Set Cloudflare to use Full SSL mode. Since we are using a self-signed certificate, don't use Full (strict) mode.

|

||||

- Create a self-signed certificate:

|

||||

- `openssl req -x509 -nodes -days 365 -newkey rsa:2048 -keyout /etc/ssl/private/nginx-selfsigned.key -out /etc/ssl/certs/nginx-selfsigned.crt`

|

||||

- `sudo nano /etc/nginx/sites-available/oai.conf`

|

||||

- ```

|

||||

server {

|

||||

listen 443 ssl;

|

||||

server_name yourdomain.com www.yourdomain.com;

|

||||

|

||||

ssl_certificate /etc/ssl/certs/nginx-selfsigned.crt;

|

||||

ssl_certificate_key /etc/ssl/private/nginx-selfsigned.key;

|

||||

|

||||

# Only allow inbound traffic from Cloudflare

|

||||

allow 173.245.48.0/20;

|

||||

allow 103.21.244.0/22;

|

||||

allow 103.22.200.0/22;

|

||||

allow 103.31.4.0/22;

|

||||

allow 141.101.64.0/18;

|

||||

allow 108.162.192.0/18;

|

||||

allow 190.93.240.0/20;

|

||||

allow 188.114.96.0/20;

|

||||

allow 197.234.240.0/22;

|

||||

allow 198.41.128.0/17;

|

||||

allow 162.158.0.0/15;

|

||||

allow 104.16.0.0/13;

|

||||

allow 104.24.0.0/14;

|

||||

allow 172.64.0.0/13;

|

||||

allow 131.0.72.0/22;

|

||||

deny all;

|

||||

|

||||

location / {

|

||||

proxy_pass http://localhost:7860;

|

||||

proxy_http_version 1.1;

|

||||

proxy_set_header Upgrade $http_upgrade;

|

||||

proxy_set_header Connection 'upgrade';

|

||||

proxy_set_header Host $host;

|

||||

proxy_cache_bypass $http_upgrade;

|

||||

}

|

||||

|

||||

ssl_protocols TLSv1.2 TLSv1.3;

|

||||

ssl_ciphers 'ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES128-GCM-SHA256';

|

||||

ssl_prefer_server_ciphers on;

|

||||

ssl_session_cache shared:SSL:10m;

|

||||

}

|

||||

```

|

||||

- Replace `yourdomain.com` with your domain name.

|

||||

- Ctrl+X to exit, Y to save, Enter to confirm.

|

||||

- `sudo ln -s /etc/nginx/sites-available/oai.conf /etc/nginx/sites-enabled`

|

||||

|

||||

## Updating/Restarting the application

|

||||

|

||||

After making an .env change, you need to restart the application for it to take effect.

|

||||

|

||||

- `docker compose down`

|

||||

- `docker compose up -d`

|

||||

|

||||

To update the application to the latest version:

|

||||

|

||||

- `docker compose pull`

|

||||

- `docker compose down`

|

||||

- `docker compose up -d`

|

||||

- `docker image prune -f`

|

||||

@@ -0,0 +1,85 @@

|

||||

# User Management

|

||||

|

||||

The proxy supports several different user management strategies. You can choose the one that best fits your needs by setting the `GATEKEEPER` environment variable.

|

||||

|

||||

Several of these features require you to set secrets in your environment. If using Huggingface Spaces to deploy, do not set these in your `.env` file because that file is public and anyone can see it.

|

||||

|

||||

## Table of Contents

|

||||

|

||||

- [No user management](#no-user-management-gatekeepernone)

|

||||

- [Single-password authentication](#single-password-authentication-gatekeeperproxy_key)

|

||||

- [Per-user authentication](#per-user-authentication-gatekeeperuser_token)

|

||||

- [Memory](#memory)

|

||||

- [Firebase Realtime Database](#firebase-realtime-database)

|

||||

- [Firebase setup instructions](#firebase-setup-instructions)

|

||||

- [SQLite Database](#sqlite-database)

|

||||

- [Whitelisting admin IP addresses](#whitelisting-admin-ip-addresses)

|

||||

|

||||

## No user management (`GATEKEEPER=none`)

|

||||

|

||||

This is the default mode. The proxy will not require any authentication to access the server and offers basic IP-based rate limiting and anti-abuse features.

|

||||

|

||||